Mirror of https://github.com/Azure/cosmos-explorer.git (synced 2025-12-26 12:21:23 +00:00)

Compare commits: remove-rup...aad-fix (74 commits)

| Author | SHA1 | Date |
|---|---|---|
| | cfbbf115f1 | |
| | a298fd8389 | |
| | 1ecc467f60 | |
| | b3cafe3468 | |
| | 4be53284b5 | |
| | c1937ca464 | |
| | 2b2de7c645 | |
| | 8c40df0fa1 | |
| | fcbc9474ea | |
| | 81f861af39 | |
| | 9afa29cdb6 | |
| | 9a1e8b2d87 | |
| | babda4d9cb | |
| | 9d20a13dd4 | |
| | 3effbe6991 | |
| | af53697ff4 | |
| | b1ad80480e | |
| | 9247a6c4a2 | |
| | 767d46480e | |
| | 2d98c5d269 | |
| | 6627172a52 | |
| | 19fa5e17a5 | |
| | a4a367a212 | |
| | 983c9201bb | |
| | 76d7f00a90 | |
| | 6490597736 | |
| | 229119e697 | |
| | ceefd7c615 | |
| | 6e619175c6 | |
| | 08e8bf4bcf | |
| | 89dc0f394b | |
| | 30e0001b7f | |
| | 4a8f408112 | |
| | e801364800 | |
| | a55f2d0de9 | |
| | d40b1aa9b5 | |
| | cc63cdc1fd | |
| | c3058ee5a9 | |
| | b000631a0c | |
| | e8f4c8f93c | |
| | 16bde97e47 | |
| | 6da43ee27b | |
| | ebae484b8f | |
| | dfb1b50621 | |
| | f54e8eb692 | |
| | ea39c1d092 | |
| | c21f42159f | |
| | 31e4b49f11 | |
| | 40491ec9c5 | |
| | e133df18dd | |
| | 0532ed26a2 | |
| | fd60c9c15e | |
| | 04ab1f3918 | |
| | b784ac0f86 | |
| | 28899f63d7 | |
| | 9cbf632577 | |
| | 17fd2185dc | |
| | a93c8509cd | |
| | 5c93c11bd9 | |
| | 85d2378d3a | |
| | 84b6075ee8 | |
| | d880723be9 | |
| | 4ce9dcc024 | |
| | addcfedd5e | |
| | a133134b8b | |
| | 79dec6a8a8 | |
| | 53a8cea95e | |
| | 5f1f7a8266 | |
| | a009a8ba5f | |
| | 3e782527d0 | |
| | e6ca1d25c9 | |
| | 473f722dcc | |
| | 5741802c25 | |
| | e2e58f73b1 | |

11

.env.example

@@ -1,7 +1,14 @@

|

|||||||

# These options are only needed when if running end to end tests locally

|

|

||||||

PORTAL_RUNNER_USERNAME=

|

PORTAL_RUNNER_USERNAME=

|

||||||

PORTAL_RUNNER_PASSWORD=

|

PORTAL_RUNNER_PASSWORD=

|

||||||

PORTAL_RUNNER_SUBSCRIPTION=

|

PORTAL_RUNNER_SUBSCRIPTION=

|

||||||

PORTAL_RUNNER_RESOURCE_GROUP=

|

PORTAL_RUNNER_RESOURCE_GROUP=

|

||||||

PORTAL_RUNNER_DATABASE_ACCOUNT=

|

PORTAL_RUNNER_DATABASE_ACCOUNT=

|

||||||

PORTAL_RUNNER_CONNECTION_STRING=

|

PORTAL_RUNNER_DATABASE_ACCOUNT_KEY=

|

||||||

|

PORTAL_RUNNER_CONNECTION_STRING=

|

||||||

|

NOTEBOOKS_TEST_RUNNER_TENANT_ID=

|

||||||

|

NOTEBOOKS_TEST_RUNNER_CLIENT_ID=

|

||||||

|

NOTEBOOKS_TEST_RUNNER_CLIENT_SECRET=

|

||||||

|

CASSANDRA_CONNECTION_STRING=

|

||||||

|

MONGO_CONNECTION_STRING=

|

||||||

|

TABLES_CONNECTION_STRING=

|

||||||

|

DATA_EXPLORER_ENDPOINT=https://localhost:1234/hostedExplorer.html

|

||||||
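The variables in this `.env.example` hunk ship as empty placeholders, so a local end to end run can fail late if one is left blank. A minimal sketch of an up-front check is given here; the helper name `findMissingEnvVars` and the choice of which variables to treat as required are assumptions for illustration and are not part of the repository.

```javascript
// Hypothetical helper: returns the names from `required` that are unset or blank in `env`.
function findMissingEnvVars(env, required) {
  return required.filter((name) => !env[name] || String(env[name]).trim() === "");
}

// Names copied from the .env.example hunk; which ones a given suite needs is an assumption.
const requiredForHostedTests = [
  "PORTAL_RUNNER_CONNECTION_STRING",
  "MONGO_CONNECTION_STRING",
  "CASSANDRA_CONNECTION_STRING",
  "TABLES_CONNECTION_STRING",
  "DATA_EXPLORER_ENDPOINT",
];

// Made-up environment for illustration; a real run would pass process.env instead.
const exampleEnv = {
  PORTAL_RUNNER_CONNECTION_STRING: "AccountEndpoint=https://example.invalid/;AccountKey=fake;",
  DATA_EXPLORER_ENDPOINT: "https://localhost:1234/hostedExplorer.html",
  MONGO_CONNECTION_STRING: "",
};

const missing = findMissingEnvVars(exampleEnv, requiredForHostedTests);
console.log("Missing variables:", missing);
```

Reporting every missing name at once, rather than failing on the first, tends to save a round trip when filling in `.env`.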

@@ -14,9 +14,6 @@ src/Common/DataAccessUtilityBase.ts

|

|||||||

src/Common/DeleteFeedback.ts

|

src/Common/DeleteFeedback.ts

|

||||||

src/Common/DocumentClientUtilityBase.ts

|

src/Common/DocumentClientUtilityBase.ts

|

||||||

src/Common/EditableUtility.ts

|

src/Common/EditableUtility.ts

|

||||||

src/Common/EnvironmentUtility.ts

|

|

||||||

src/Common/ErrorParserUtility.test.ts

|

|

||||||

src/Common/ErrorParserUtility.ts

|

|

||||||

src/Common/HashMap.test.ts

|

src/Common/HashMap.test.ts

|

||||||

src/Common/HashMap.ts

|

src/Common/HashMap.ts

|

||||||

src/Common/HeadersUtility.test.ts

|

src/Common/HeadersUtility.test.ts

|

||||||

@@ -45,7 +42,6 @@ src/Contracts/ViewModels.ts

|

|||||||

src/Controls/Heatmap/Heatmap.test.ts

|

src/Controls/Heatmap/Heatmap.test.ts

|

||||||

src/Controls/Heatmap/Heatmap.ts

|

src/Controls/Heatmap/Heatmap.ts

|

||||||

src/Controls/Heatmap/HeatmapDatatypes.ts

|

src/Controls/Heatmap/HeatmapDatatypes.ts

|

||||||

src/Definitions/adal.d.ts

|

|

||||||

src/Definitions/datatables.d.ts

|

src/Definitions/datatables.d.ts

|

||||||

src/Definitions/gif.d.ts

|

src/Definitions/gif.d.ts

|

||||||

src/Definitions/globals.d.ts

|

src/Definitions/globals.d.ts

|

||||||

@@ -204,8 +200,6 @@ src/Explorer/Tabs/QueryTab.test.ts

|

|||||||

src/Explorer/Tabs/QueryTab.ts

|

src/Explorer/Tabs/QueryTab.ts

|

||||||

src/Explorer/Tabs/QueryTablesTab.ts

|

src/Explorer/Tabs/QueryTablesTab.ts

|

||||||

src/Explorer/Tabs/ScriptTabBase.ts

|

src/Explorer/Tabs/ScriptTabBase.ts

|

||||||

src/Explorer/Tabs/SettingsTab.test.ts

|

|

||||||

src/Explorer/Tabs/SettingsTab.ts

|

|

||||||

src/Explorer/Tabs/SparkMasterTab.ts

|

src/Explorer/Tabs/SparkMasterTab.ts

|

||||||

src/Explorer/Tabs/StoredProcedureTab.ts

|

src/Explorer/Tabs/StoredProcedureTab.ts

|

||||||

src/Explorer/Tabs/TabComponents.ts

|

src/Explorer/Tabs/TabComponents.ts

|

||||||

@@ -247,9 +241,6 @@ src/Platform/Hosted/Authorization.ts

|

|||||||

src/Platform/Hosted/DataAccessUtility.ts

|

src/Platform/Hosted/DataAccessUtility.ts

|

||||||

src/Platform/Hosted/ExplorerFactory.ts

|

src/Platform/Hosted/ExplorerFactory.ts

|

||||||

src/Platform/Hosted/Helpers/ConnectionStringParser.test.ts

|

src/Platform/Hosted/Helpers/ConnectionStringParser.test.ts

|

||||||

src/Platform/Hosted/Helpers/ConnectionStringParser.ts

|

|

||||||

src/Platform/Hosted/HostedUtils.test.ts

|

|

||||||

src/Platform/Hosted/HostedUtils.ts

|

|

||||||

src/Platform/Hosted/Main.ts

|

src/Platform/Hosted/Main.ts

|

||||||

src/Platform/Hosted/Maint.test.ts

|

src/Platform/Hosted/Maint.test.ts

|

||||||

src/Platform/Hosted/NotificationsClient.ts

|

src/Platform/Hosted/NotificationsClient.ts

|

||||||

@@ -292,8 +283,6 @@ src/Utils/DatabaseAccountUtils.ts

|

|||||||

src/Utils/JunoUtils.ts

|

src/Utils/JunoUtils.ts

|

||||||

src/Utils/MessageValidation.ts

|

src/Utils/MessageValidation.ts

|

||||||

src/Utils/NotebookConfigurationUtils.ts

|

src/Utils/NotebookConfigurationUtils.ts

|

||||||

src/Utils/OfferUtils.test.ts

|

|

||||||

src/Utils/OfferUtils.ts

|

|

||||||

src/Utils/PricingUtils.test.ts

|

src/Utils/PricingUtils.test.ts

|

||||||

src/Utils/QueryUtils.test.ts

|

src/Utils/QueryUtils.test.ts

|

||||||

src/Utils/QueryUtils.ts

|

src/Utils/QueryUtils.ts

|

||||||

@@ -398,19 +387,5 @@ src/Explorer/Tree/ResourceTreeAdapterForResourceToken.tsx

|

|||||||

src/GalleryViewer/Cards/GalleryCardComponent.tsx

|

src/GalleryViewer/Cards/GalleryCardComponent.tsx

|

||||||

src/GalleryViewer/GalleryViewer.tsx

|

src/GalleryViewer/GalleryViewer.tsx

|

||||||

src/GalleryViewer/GalleryViewerComponent.tsx

|

src/GalleryViewer/GalleryViewerComponent.tsx

|

||||||

cypress/integration/dataexplorer/CASSANDRA/addCollection.spec.ts

|

|

||||||

cypress/integration/dataexplorer/GRAPH/addCollection.spec.ts

|

|

||||||

cypress/integration/dataexplorer/ci-tests/addCollectionPane.spec.ts

|

|

||||||

cypress/integration/dataexplorer/ci-tests/createDatabase.spec.ts

|

|

||||||

cypress/integration/dataexplorer/ci-tests/deleteCollection.spec.ts

|

|

||||||

cypress/integration/dataexplorer/ci-tests/deleteDatabase.spec.ts

|

|

||||||

cypress/integration/dataexplorer/MONGO/addCollection.spec.ts

|

|

||||||

cypress/integration/dataexplorer/MONGO/addCollectionAutopilot.spec.ts

|

|

||||||

cypress/integration/dataexplorer/MONGO/addCollectionExistingDatabase.spec.ts

|

|

||||||

cypress/integration/dataexplorer/MONGO/provisionDatabaseThroughput.spec.ts

|

|

||||||

cypress/integration/dataexplorer/SQL/addCollection.spec.ts

|

|

||||||

cypress/integration/dataexplorer/TABLE/addCollection.spec.ts

|

|

||||||

cypress/integration/notebook/newNotebook.spec.ts

|

|

||||||

cypress/integration/notebook/resourceTree.spec.ts

|

|

||||||

__mocks__/monaco-editor.ts

|

__mocks__/monaco-editor.ts

|

||||||

src/Explorer/Tree/ResourceTreeAdapterForResourceToken.test.tsx

|

src/Explorer/Tree/ResourceTreeAdapterForResourceToken.test.tsx

|

||||||

31

.eslintrc.js

@@ -1,41 +1,39 @@

|

|||||||

module.exports = {

|

module.exports = {

|

||||||

env: {

|

env: {

|

||||||

browser: true,

|

browser: true,

|

||||||

es6: true

|

es6: true,

|

||||||

},

|

},

|

||||||

plugins: ["@typescript-eslint", "no-null", "prefer-arrow"],

|

plugins: ["@typescript-eslint", "no-null", "prefer-arrow"],

|

||||||

extends: ["eslint:recommended", "plugin:@typescript-eslint/recommended"],

|

extends: ["eslint:recommended", "plugin:@typescript-eslint/recommended"],

|

||||||

globals: {

|

globals: {

|

||||||

Atomics: "readonly",

|

Atomics: "readonly",

|

||||||

SharedArrayBuffer: "readonly"

|

SharedArrayBuffer: "readonly",

|

||||||

},

|

},

|

||||||

parser: "@typescript-eslint/parser",

|

parser: "@typescript-eslint/parser",

|

||||||

parserOptions: {

|

parserOptions: {

|

||||||

ecmaFeatures: {

|

ecmaFeatures: {

|

||||||

jsx: true

|

jsx: true,

|

||||||

},

|

},

|

||||||

ecmaVersion: 2018,

|

ecmaVersion: 2018,

|

||||||

sourceType: "module"

|

sourceType: "module",

|

||||||

},

|

},

|

||||||

overrides: [

|

overrides: [

|

||||||

{

|

{

|

||||||

files: ["**/*.tsx"],

|

files: ["**/*.tsx"],

|

||||||

env: {

|

extends: ["plugin:react/recommended"], // TODO: Add react-hooks

|

||||||

jest: true

|

plugins: ["react"],

|

||||||

},

|

|

||||||

extends: ["plugin:react/recommended"],

|

|

||||||

plugins: ["react"]

|

|

||||||

},

|

},

|

||||||

{

|

{

|

||||||

files: ["**/*.{test,spec}.{ts,tsx}"],

|

files: ["**/*.{test,spec}.{ts,tsx}"],

|

||||||

env: {

|

env: {

|

||||||

jest: true

|

jest: true,

|

||||||

},

|

},

|

||||||

extends: ["plugin:jest/recommended"],

|

extends: ["plugin:jest/recommended"],

|

||||||

plugins: ["jest"]

|

plugins: ["jest"],

|

||||||

}

|

},

|

||||||

],

|

],

|

||||||

rules: {

|

rules: {

|

||||||

|

"no-console": ["error", { allow: ["error", "warn", "dir"] }],

|

||||||

curly: "error",

|

curly: "error",

|

||||||

"@typescript-eslint/no-unused-vars": "error",

|

"@typescript-eslint/no-unused-vars": "error",

|

||||||

"@typescript-eslint/no-extraneous-class": "error",

|

"@typescript-eslint/no-extraneous-class": "error",

|

||||||

@@ -43,12 +41,13 @@ module.exports = {

|

|||||||

"@typescript-eslint/no-explicit-any": "error",

|

"@typescript-eslint/no-explicit-any": "error",

|

||||||

"prefer-arrow/prefer-arrow-functions": ["error", { allowStandaloneDeclarations: true }],

|

"prefer-arrow/prefer-arrow-functions": ["error", { allowStandaloneDeclarations: true }],

|

||||||

eqeqeq: "error",

|

eqeqeq: "error",

|

||||||

|

"react/display-name": "off",

|

||||||

"no-restricted-syntax": [

|

"no-restricted-syntax": [

|

||||||

"error",

|

"error",

|

||||||

{

|

{

|

||||||

selector: "CallExpression[callee.object.name='JSON'][callee.property.name='stringify'] Identifier[name=/$err/]",

|

selector: "CallExpression[callee.object.name='JSON'][callee.property.name='stringify'] Identifier[name=/$err/]",

|

||||||

message: "Do not use JSON.stringify(error). It will print '{}'"

|

message: "Do not use JSON.stringify(error). It will print '{}'",

|

||||||

}

|

},

|

||||||

]

|

],

|

||||||

}

|

},

|

||||||

};

|

};

|

||||||

|

|||||||
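The `no-restricted-syntax` rule in this file carries the message "Do not use JSON.stringify(error). It will print '{}'". The short sketch below shows why: the `message` and `stack` properties of an `Error` are not enumerable, so `JSON.stringify` skips them. The helper `describeError` is a hypothetical alternative for illustration and does not come from the repository.

```javascript
// An Error's own `message` and `stack` properties are non-enumerable,
// so JSON.stringify finds nothing to serialize.
const error = new Error("Request failed");
const naive = JSON.stringify(error);

// Hypothetical helper: pick the fields explicitly before serializing.
function describeError(err) {
  return JSON.stringify({ name: err.name, message: err.message });
}

const described = describeError(error);
console.log(naive);
console.log(described);
```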

48

.github/workflows/ci.yml

vendored

@@ -79,32 +79,32 @@ jobs:

|

|||||||

name: dist

|

name: dist

|

||||||

path: dist/

|

path: dist/

|

||||||

endtoendemulator:

|

endtoendemulator:

|

||||||

name: "End To End Tests | Emulator | SQL"

|

name: "End To End Emulator Tests"

|

||||||

needs: [lint, format, compile, unittest]

|

needs: [lint, format, compile, unittest]

|

||||||

runs-on: windows-latest

|

runs-on: windows-latest

|

||||||

steps:

|

steps:

|

||||||

- uses: actions/checkout@v2

|

- uses: actions/checkout@v2

|

||||||

- uses: southpolesteve/cosmos-emulator-github-action@v1

|

|

||||||

- name: Use Node.js 12.x

|

- name: Use Node.js 12.x

|

||||||

uses: actions/setup-node@v1

|

uses: actions/setup-node@v1

|

||||||

with:

|

with:

|

||||||

node-version: 12.x

|

node-version: 12.x

|

||||||

- name: Restore Cypress Binary Cache

|

- uses: southpolesteve/cosmos-emulator-github-action@v1

|

||||||

uses: actions/cache@v2

|

|

||||||

with:

|

|

||||||

path: ~/.cache/Cypress

|

|

||||||

key: ${{ runner.os }}-cypress-binary-cache

|

|

||||||

- name: End to End Tests

|

- name: End to End Tests

|

||||||

run: |

|

run: |

|

||||||

npm ci

|

npm ci

|

||||||

npm start &

|

npm start &

|

||||||

npm ci --prefix ./cypress

|

npm run wait-for-server

|

||||||

npm run test:ci --prefix ./cypress -- --spec ./integration/dataexplorer/ci-tests/createDatabase.spec.ts

|

npx jest -c ./jest.config.e2e.js --detectOpenHandles test/sql/container.spec.ts

|

||||||

shell: bash

|

shell: bash

|

||||||

env:

|

env:

|

||||||

EMULATOR_ENDPOINT: https://0.0.0.0:8081/

|

DATA_EXPLORER_ENDPOINT: "https://localhost:1234/explorer.html?platform=Emulator"

|

||||||

|

PLATFORM: "Emulator"

|

||||||

NODE_TLS_REJECT_UNAUTHORIZED: 0

|

NODE_TLS_REJECT_UNAUTHORIZED: 0

|

||||||

CYPRESS_CACHE_FOLDER: ~/.cache/Cypress

|

- uses: actions/upload-artifact@v2

|

||||||

|

if: failure()

|

||||||

|

with:

|

||||||

|

name: screenshots

|

||||||

|

path: failed-*

|

||||||

accessibility:

|

accessibility:

|

||||||

name: "Accessibility | Hosted"

|

name: "Accessibility | Hosted"

|

||||||

needs: [lint, format, compile, unittest]

|

needs: [lint, format, compile, unittest]

|

||||||

@@ -123,13 +123,13 @@ jobs:

|

|||||||

sudo sysctl -p

|

sudo sysctl -p

|

||||||

npm ci

|

npm ci

|

||||||

npm start &

|

npm start &

|

||||||

npx wait-on -i 5000 https-get://0.0.0.0:1234/

|

npx wait-on -i 5000 https-get://0.0.0.0:1234/

|

||||||

node utils/accesibilityCheck.js

|

node utils/accesibilityCheck.js

|

||||||

shell: bash

|

shell: bash

|

||||||

env:

|

env:

|

||||||

NODE_TLS_REJECT_UNAUTHORIZED: 0

|

NODE_TLS_REJECT_UNAUTHORIZED: 0

|

||||||

endtoendpuppeteer:

|

endtoendhosted:

|

||||||

name: "End to end puppeteer tests"

|

name: "End to End Hosted Tests"

|

||||||

needs: [lint, format, compile, unittest]

|

needs: [lint, format, compile, unittest]

|

||||||

runs-on: ubuntu-latest

|

runs-on: ubuntu-latest

|

||||||

steps:

|

steps:

|

||||||

@@ -138,7 +138,7 @@ jobs:

|

|||||||

uses: actions/setup-node@v1

|

uses: actions/setup-node@v1

|

||||||

with:

|

with:

|

||||||

node-version: 12.x

|

node-version: 12.x

|

||||||

- name: End to End Puppeteer Tests

|

- name: End to End Hosted Tests

|

||||||

run: |

|

run: |

|

||||||

npm ci

|

npm ci

|

||||||

npm start &

|

npm start &

|

||||||

@@ -147,13 +147,27 @@ jobs:

|

|||||||

shell: bash

|

shell: bash

|

||||||

env:

|

env:

|

||||||

NODE_TLS_REJECT_UNAUTHORIZED: 0

|

NODE_TLS_REJECT_UNAUTHORIZED: 0

|

||||||

|

PORTAL_RUNNER_SUBSCRIPTION: ${{ secrets.PORTAL_RUNNER_SUBSCRIPTION }}

|

||||||

|

PORTAL_RUNNER_RESOURCE_GROUP: ${{ secrets.PORTAL_RUNNER_RESOURCE_GROUP }}

|

||||||

|

PORTAL_RUNNER_DATABASE_ACCOUNT: ${{ secrets.PORTAL_RUNNER_DATABASE_ACCOUNT }}

|

||||||

|

PORTAL_RUNNER_DATABASE_ACCOUNT_KEY: ${{ secrets.PORTAL_RUNNER_DATABASE_ACCOUNT_KEY }}

|

||||||

|

NOTEBOOKS_TEST_RUNNER_TENANT_ID: ${{ secrets.NOTEBOOKS_TEST_RUNNER_TENANT_ID }}

|

||||||

|

NOTEBOOKS_TEST_RUNNER_CLIENT_ID: ${{ secrets.NOTEBOOKS_TEST_RUNNER_CLIENT_ID }}

|

||||||

|

NOTEBOOKS_TEST_RUNNER_CLIENT_SECRET: ${{ secrets.NOTEBOOKS_TEST_RUNNER_CLIENT_SECRET }}

|

||||||

PORTAL_RUNNER_CONNECTION_STRING: ${{ secrets.CONNECTION_STRING_SQL }}

|

PORTAL_RUNNER_CONNECTION_STRING: ${{ secrets.CONNECTION_STRING_SQL }}

|

||||||

MONGO_CONNECTION_STRING: ${{ secrets.CONNECTION_STRING_MONGO }}

|

MONGO_CONNECTION_STRING: ${{ secrets.CONNECTION_STRING_MONGO }}

|

||||||

CASSANDRA_CONNECTION_STRING: ${{ secrets.CONNECTION_STRING_CASSANDRA }}

|

CASSANDRA_CONNECTION_STRING: ${{ secrets.CONNECTION_STRING_CASSANDRA }}

|

||||||

|

TABLES_CONNECTION_STRING: ${{ secrets.CONNECTION_STRING_TABLE }}

|

||||||

|

DATA_EXPLORER_ENDPOINT: "https://localhost:1234/hostedExplorer.html"

|

||||||

|

- uses: actions/upload-artifact@v2

|

||||||

|

if: failure()

|

||||||

|

with:

|

||||||

|

name: screenshots

|

||||||

|

path: failed-*

|

||||||

nuget:

|

nuget:

|

||||||

name: Publish Nuget

|

name: Publish Nuget

|

||||||

if: github.ref == 'refs/heads/master' || contains(github.ref, 'hotfix/') || contains(github.ref, 'release/')

|

if: github.ref == 'refs/heads/master' || contains(github.ref, 'hotfix/') || contains(github.ref, 'release/')

|

||||||

needs: [lint, format, compile, build, unittest, endtoendemulator, endtoendpuppeteer]

|

needs: [lint, format, compile, build, unittest, endtoendemulator, endtoendhosted, accessibility]

|

||||||

runs-on: ubuntu-latest

|

runs-on: ubuntu-latest

|

||||||

env:

|

env:

|

||||||

NUGET_SOURCE: ${{ secrets.NUGET_SOURCE }}

|

NUGET_SOURCE: ${{ secrets.NUGET_SOURCE }}

|

||||||

@@ -177,7 +191,7 @@ jobs:

|

|||||||

nugetmpac:

|

nugetmpac:

|

||||||

name: Publish Nuget MPAC

|

name: Publish Nuget MPAC

|

||||||

if: github.ref == 'refs/heads/master' || contains(github.ref, 'hotfix/') || contains(github.ref, 'release/')

|

if: github.ref == 'refs/heads/master' || contains(github.ref, 'hotfix/') || contains(github.ref, 'release/')

|

||||||

needs: [lint, format, compile, build, unittest, endtoendemulator, endtoendpuppeteer]

|

needs: [lint, format, compile, build, unittest, endtoendemulator, endtoendhosted, accessibility]

|

||||||

runs-on: ubuntu-latest

|

runs-on: ubuntu-latest

|

||||||

env:

|

env:

|

||||||

NUGET_SOURCE: ${{ secrets.NUGET_SOURCE }}

|

NUGET_SOURCE: ${{ secrets.NUGET_SOURCE }}

|

||||||

|

|||||||
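Both end to end jobs in this workflow start the dev server in the background (`npm start &`) and then block until it answers, via `npm run wait-for-server` or `npx wait-on -i 5000 https-get://0.0.0.0:1234/`. The general polling idea can be sketched as follows. This is not how `wait-on` is implemented; `waitUntilReady` and its option names are hypothetical, and the `check` callback stands in for an HTTPS GET.

```javascript
// Hypothetical helper: calls `check` repeatedly until it returns a truthy value
// or the timeout would be exceeded. Resolves with the number of attempts made.
async function waitUntilReady(check, { intervalMs = 5000, timeoutMs = 60000 } = {}) {
  const deadline = Date.now() + timeoutMs;
  let attempts = 0;
  for (;;) {
    attempts += 1;
    let ready = false;
    try {
      ready = Boolean(await check());
    } catch {
      ready = false; // treat errors such as a refused connection as "not up yet"
    }
    if (ready) {
      return attempts;
    }
    if (Date.now() + intervalMs > deadline) {
      throw new Error(`Server not ready after ${attempts} attempts`);
    }
    await new Promise((resolve) => setTimeout(resolve, intervalMs));
  }
}

// Simulated server that refuses the first two probes and then responds.
let probes = 0;
const simulatedCheck = () => {
  probes += 1;
  if (probes < 3) {
    throw new Error("ECONNREFUSED");
  }
  return true;
};

const readyPromise = waitUntilReady(simulatedCheck, { intervalMs: 1, timeoutMs: 1000 });
readyPromise.then((attempts) => console.log(`ready after ${attempts} attempts`));
```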

25

.github/workflows/runners.yml

vendored

@@ -1,25 +0,0 @@

|

|||||||

name: Runners

|

|

||||||

on:

|

|

||||||

schedule:

|

|

||||||

- cron: "0 * 1 * *"

|

|

||||||

jobs:

|

|

||||||

sqlcreatecollection:

|

|

||||||

runs-on: ubuntu-latest

|

|

||||||

name: "SQL | Create Collection"

|

|

||||||

steps:

|

|

||||||

- uses: actions/checkout@v2

|

|

||||||

- uses: actions/setup-node@v1

|

|

||||||

- run: npm ci

|

|

||||||

- run: npm run test:e2e

|

|

||||||

env:

|

|

||||||

PORTAL_RUNNER_APP_INSIGHTS_KEY: ${{ secrets.PORTAL_RUNNER_APP_INSIGHTS_KEY }}

|

|

||||||

PORTAL_RUNNER_USERNAME: ${{ secrets.PORTAL_RUNNER_USERNAME }}

|

|

||||||

PORTAL_RUNNER_PASSWORD: ${{ secrets.PORTAL_RUNNER_PASSWORD }}

|

|

||||||

PORTAL_RUNNER_SUBSCRIPTION: 69e02f2d-f059-4409-9eac-97e8a276ae2c

|

|

||||||

PORTAL_RUNNER_RESOURCE_GROUP: runners

|

|

||||||

PORTAL_RUNNER_DATABASE_ACCOUNT: portal-sql-runner

|

|

||||||

- uses: actions/upload-artifact@v2

|

|

||||||

if: failure()

|

|

||||||

with:

|

|

||||||

name: screenshots

|

|

||||||

path: failure.png

|

|

||||||

3

.gitignore

vendored

@@ -9,9 +9,6 @@ pkg/DataExplorer/*

|

|||||||

test/out/*

|

test/out/*

|

||||||

workers/**/*.js

|

workers/**/*.js

|

||||||

*.trx

|

*.trx

|

||||||

cypress/videos

|

|

||||||

cypress/screenshots

|

|

||||||

cypress/fixtures

|

|

||||||

notebookapp/*

|

notebookapp/*

|

||||||

Contracts/*

|

Contracts/*

|

||||||

.DS_Store

|

.DS_Store

|

||||||

|

|||||||

BIN

.vs/slnx.sqlite

Normal file

Binary file not shown.

62

README.md

@@ -13,29 +13,18 @@ UI for Azure Cosmos DB. Powers the [Azure Portal](https://portal.azure.com/), ht

|

|||||||

|

|

||||||

### Watch mode

|

### Watch mode

|

||||||

|

|

||||||

Run `npm run watch` to start the development server and automatically rebuild on changes

|

Run `npm start` to start the development server and automatically rebuild on changes

|

||||||

|

|

||||||

### Specifying Development Platform

|

### Hosted Development (https://cosmos.azure.com)

|

||||||

|

|

||||||

Setting the environment variable `PLATFORM` during the build process will force the explorer to load the specified platform. By default in development it will run in `Hosted` mode. Valid options:

|

- Visit: `https://localhost:1234/hostedExplorer.html`

|

||||||

|

- Local sign in via AAD will NOT work. Connection string only in dev mode. Use the Portal if you need AAD auth.

|

||||||

- Hosted

|

- The default webpack dev server configuration will proxy requests to the production portal backend: `https://main.documentdb.ext.azure.com`. This will allow you to use production connection strings on your local machine.

|

||||||

- Emulator

|

|

||||||

- Portal

|

|

||||||

|

|

||||||

`PLATFORM=Emulator npm run watch`

|

|

||||||

|

|

||||||

### Hosted Development

|

|

||||||

|

|

||||||

The default webpack dev server configuration will proxy requests to the production portal backend: `https://main.documentdb.ext.azure.com`. This will allow you to use production connection strings on your local machine.

|

|

||||||

|

|

||||||

To run pure hosted mode, in `webpack.config.js` change index HtmlWebpackPlugin to use hostedExplorer.html and change entry for index to use HostedExplorer.ts.

|

|

||||||

|

|

||||||

### Emulator Development

|

### Emulator Development

|

||||||

|

|

||||||

In a window environment, running `npm run build` will automatically copy the built files from `/dist` over to the default emulator install paths. In a non-windows enironment you can specify an alternate endpoint using `EMULATOR_ENDPOINT` and webpack dev server will proxy requests for you.

|

- Start the Cosmos Emulator

|

||||||

|

- Visit: https://localhost:1234/index.html

|

||||||

`PLATFORM=Emulator EMULATOR_ENDPOINT=https://my-vm.azure.com:8081 npm run watch`

|

|

||||||

|

|

||||||

#### Setting up a Remote Emulator

|

#### Setting up a Remote Emulator

|

||||||

|

|

||||||
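The Hosted Development text in this hunk says the default webpack dev server configuration proxies requests to the production portal backend. The repository's `webpack.config.js` is not part of this diff, so the following is only a sketch of what such a proxy entry can look like. It assumes webpack-dev-server v3 style options, and the `/api` path prefix is a hypothetical placeholder.

```javascript
// Sketch only: the real webpack.config.js is not shown in this diff.
const PORTAL_BACKEND = "https://main.documentdb.ext.azure.com"; // URL quoted in the README text

const devServerConfig = {
  https: true, // the README URLs use https://localhost:1234
  port: 1234,
  proxy: {
    // "/api" is a hypothetical path prefix chosen for illustration
    "/api": {
      target: PORTAL_BACKEND,
      changeOrigin: true, // send the target's host name in the Host header
      secure: true, // verify the backend's TLS certificate
    },
  },
};

console.log(JSON.stringify(devServerConfig, null, 2));
```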

@@ -55,16 +44,8 @@ The Cosmos emulator currently only runs in Windows environments. You can still d

|

|||||||

|

|

||||||

### Portal Development

|

### Portal Development

|

||||||

|

|

||||||

The Cosmos Portal that consumes this repo is not currently open source. If you have access to this project, `npm run build` will copy the built files over to the portal where they will be loaded by the portal development environment

|

- Visit: https://ms.portal.azure.com/?dataExplorerSource=https%3A%2F%2Flocalhost%3A1234%2Fexplorer.html

|

||||||

|

- You may have to manually visit https://localhost:1234/explorer.html first and click through any SSL certificate warnings

|

||||||

You can however load a local running instance of data explorer in the production portal.

|

|

||||||

|

|

||||||

1. Turn off browser SSL validation for localhost: chrome://flags/#allow-insecure-localhost OR Install valid SSL certs for localhost (on IE, follow these [instructions](https://www.technipages.com/ie-bypass-problem-with-this-websites-security-certificate) to install the localhost certificate in the right place)

|

|

||||||

2. Whitelist `https://localhost:1234` domain for CORS in the Azure Cosmos DB portal

|

|

||||||

3. Start the project in portal mode: `PLATFORM=Portal npm run watch`

|

|

||||||

4. Load the portal using the following link: https://ms.portal.azure.com/?dataExplorerSource=https%3A%2F%2Flocalhost%3A1234%2Fexplorer.html

|

|

||||||

|

|

||||||

Live reload will occur, but data explorer will not properly integrate again with the parent iframe. You will have to manually reload the page.

|

|

||||||

|

|

||||||

### Testing

|

### Testing

|

||||||

|

|

||||||
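The portal link in the Portal Development section passes the local explorer address as a percent-encoded `dataExplorerSource` query parameter. A small sketch of how that link is put together is shown here; the function name `buildPortalUrl` is hypothetical.

```javascript
// Builds the portal link that points the production portal at a local Data Explorer build.
// The local URL must be percent-encoded because it is embedded in a query string.
function buildPortalUrl(localExplorerUrl, portalBase = "https://ms.portal.azure.com/") {
  return `${portalBase}?dataExplorerSource=${encodeURIComponent(localExplorerUrl)}`;
}

const portalUrl = buildPortalUrl("https://localhost:1234/explorer.html");
console.log(portalUrl);
```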

@@ -76,24 +57,21 @@ Unit tests are located adjacent to the code under test and run with [Jest](https

|

|||||||

|

|

||||||

#### End to End CI Tests

|

#### End to End CI Tests

|

||||||

|

|

||||||

[Cypress](https://www.cypress.io/) is used for end to end tests and are contained in `cypress/`. Currently, it operates as sub project with its own typescript config and dependencies. It also only operates against the emulator. To run cypress tests:

|

Jest and Puppeteer are used for end to end browser based tests and are contained in `test/`. To run these tests locally:

|

||||||

|

|

||||||

1. Ensure the emulator is running

|

1. Copy .env.example to .env

|

||||||

2. Start cosmos explorer in emulator mode: `PLATFORM=Emulator npm run watch`

|

2. Update the values in .env including your local data explorer endpoint (ask a teammate/codeowner for help with .env values)

|

||||||

3. Move into `cypress/` folder: `cd cypress`

|

3. Make sure all packages are installed `npm install`

|

||||||

4. Install dependencies: `npm install`

|

4. Run the server `npm run start` and wait for it to start

|

||||||

5. Run cypress headless(`npm run test`) or in interactive mode(`npm run test:debug`)

|

5. Run `npm run test:e2e`

|

||||||

|

|

||||||

#### End to End Production Runners

|

|

||||||

|

|

||||||

Jest and Puppeteer are used for end to end production runners and are contained in `test/`. To run these tests locally:

|

|

||||||

|

|

||||||

1. Copy .env.example to .env and fill in all variables

|

|

||||||

2. Run `npm run test:e2e`

|

|

||||||

|

|

||||||

### Releasing

|

### Releasing

|

||||||

|

|

||||||

We generally adhear to the release strategy [documented by the Azure SDK Guidelines](https://azure.github.io/azure-sdk/policies_repobranching.html#release-branches). Most releases should happen from the master branch. If master contains commits that cannot be released, you may create a release from a `release/` or `hotfix/` branch. See linked documentation for more details.

|

We generally adhere to the release strategy [documented by the Azure SDK Guidelines](https://azure.github.io/azure-sdk/policies_repobranching.html#release-branches). Most releases should happen from the master branch. If master contains commits that cannot be released, you may create a release from a `release/` or `hotfix/` branch. See linked documentation for more details.

|

||||||

|

|

||||||

|

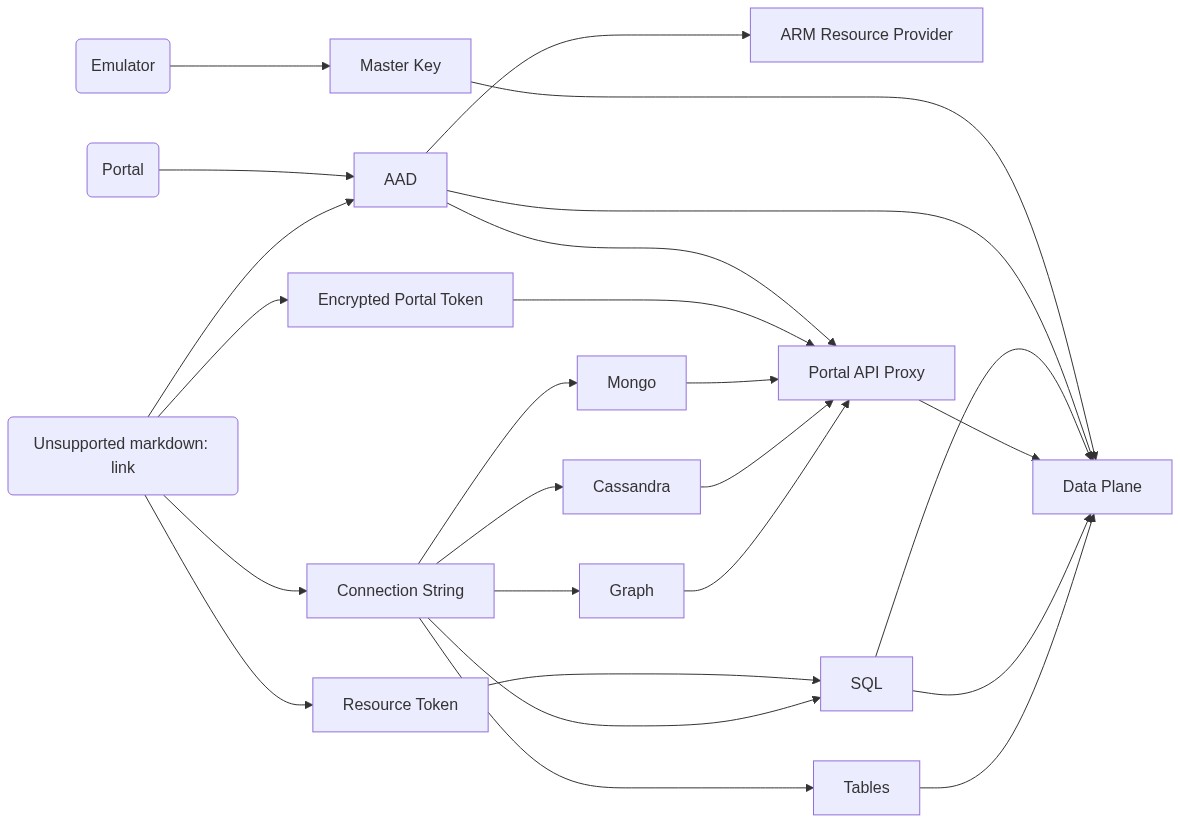

### Architechture

|

||||||

|

|

||||||

|

[](https://mermaid-js.github.io/mermaid-live-editor/#/edit/eyJjb2RlIjoiZ3JhcGggTFJcbiAgaG9zdGVkKGh0dHBzOi8vY29zbW9zLmF6dXJlLmNvbSlcbiAgcG9ydGFsKFBvcnRhbClcbiAgZW11bGF0b3IoRW11bGF0b3IpXG4gIGFhZFtBQURdXG4gIHJlc291cmNlVG9rZW5bUmVzb3VyY2UgVG9rZW5dXG4gIGNvbm5lY3Rpb25TdHJpbmdbQ29ubmVjdGlvbiBTdHJpbmddXG4gIHBvcnRhbFRva2VuW0VuY3J5cHRlZCBQb3J0YWwgVG9rZW5dXG4gIG1hc3RlcktleVtNYXN0ZXIgS2V5XVxuICBhcm1bQVJNIFJlc291cmNlIFByb3ZpZGVyXVxuICBkYXRhcGxhbmVbRGF0YSBQbGFuZV1cbiAgcHJveHlbUG9ydGFsIEFQSSBQcm94eV1cbiAgc3FsW1NRTF1cbiAgbW9uZ29bTW9uZ29dXG4gIHRhYmxlc1tUYWJsZXNdXG4gIGNhc3NhbmRyYVtDYXNzYW5kcmFdXG4gIGdyYWZbR3JhcGhdXG5cblxuICBlbXVsYXRvciAtLT4gbWFzdGVyS2V5IC0tLS0-IGRhdGFwbGFuZVxuICBwb3J0YWwgLS0-IGFhZFxuICBob3N0ZWQgLS0-IHBvcnRhbFRva2VuICYgcmVzb3VyY2VUb2tlbiAmIGNvbm5lY3Rpb25TdHJpbmcgJiBhYWRcbiAgYWFkIC0tLT4gYXJtXG4gIGFhZCAtLS0-IGRhdGFwbGFuZVxuICBhYWQgLS0tPiBwcm94eVxuICByZXNvdXJjZVRva2VuIC0tLT4gc3FsIC0tPiBkYXRhcGxhbmVcbiAgcG9ydGFsVG9rZW4gLS0tPiBwcm94eVxuICBwcm94eSAtLT4gZGF0YXBsYW5lXG4gIGNvbm5lY3Rpb25TdHJpbmcgLS0-IHNxbCAmIG1vbmdvICYgY2Fzc2FuZHJhICYgZ3JhZiAmIHRhYmxlc1xuICBzcWwgLS0-IGRhdGFwbGFuZVxuICB0YWJsZXMgLS0-IGRhdGFwbGFuZVxuICBtb25nbyAtLT4gcHJveHlcbiAgY2Fzc2FuZHJhIC0tPiBwcm94eVxuICBncmFmIC0tPiBwcm94eVxuXG5cdFx0IiwibWVybWFpZCI6eyJ0aGVtZSI6ImRlZmF1bHQifSwidXBkYXRlRWRpdG9yIjpmYWxzZX0)

|

||||||

|

|

||||||

# Contributing

|

# Contributing

|

||||||

|

|

||||||

|

|||||||

@@ -1,3 +1,4 @@

|

|||||||

module.exports = {

|

module.exports = {

|

||||||

presets: [["@babel/preset-env", { targets: { node: "current" } }], "@babel/preset-react", "@babel/preset-typescript"]

|

presets: [["@babel/preset-env", { targets: { node: "current" } }], "@babel/preset-react", "@babel/preset-typescript"],

|

||||||

|

plugins: [["@babel/plugin-proposal-decorators", { legacy: true }]],

|

||||||

};

|

};

|

||||||

|

|||||||

7

canvas/README.md

Normal file

@@ -0,0 +1,7 @@

|

|||||||

|

# Why?

|

||||||

|

|

||||||

|

This adds a mock module for `canvas`. Nteract has a ignored require and undeclared dependency on this module. `cavnas` is a server side node module and is not used in browser side code for nteract.

|

||||||

|

|

||||||

|

Installing it locally (`npm install canvas`) will resolve the problem, but it is a native module so it is flaky depending on the system, node version, processor arch, etc. This module provides a simpler, more robust solution.

|

||||||

|

|

||||||

|

Remove this workaround if [this bug](https://github.com/nteract/any-vega/issues/2) ever gets resolved

|

||||||

1

canvas/index.js

Normal file

@@ -0,0 +1 @@

|

|||||||

|

module.exports = {}

|

||||||

11

canvas/package.json

Normal file

@@ -0,0 +1,11 @@

|

|||||||

|

{

|

||||||

|

"name": "canvas",

|

||||||

|

"version": "1.0.0",

|

||||||

|

"description": "",

|

||||||

|

"main": "index.js",

|

||||||

|

"scripts": {

|

||||||

|

"test": "echo \"Error: no test specified\" && exit 1"

|

||||||

|

},

|

||||||

|

"author": "",

|

||||||

|

"license": "ISC"

|

||||||

|

}

|

||||||
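The three `canvas/` files above form a stub package whose only export is an empty object. The sketch below reproduces that layout in a temporary directory and shows that a `require("canvas")` call then resolves without any native build. How the repository actually wires the stub into its dependencies is not shown in this diff; placing it under `node_modules` here is an assumption made to keep the example self-contained.

```javascript
const fs = require("fs");
const os = require("os");
const path = require("path");
const { createRequire } = require("module");

// Build a throwaway project containing a stub `canvas` package.
const projectDir = fs.mkdtempSync(path.join(os.tmpdir(), "canvas-stub-"));
const stubDir = path.join(projectDir, "node_modules", "canvas");
fs.mkdirSync(stubDir, { recursive: true });
fs.writeFileSync(
  path.join(stubDir, "package.json"),
  JSON.stringify({ name: "canvas", version: "1.0.0", main: "index.js" })
);
fs.writeFileSync(path.join(stubDir, "index.js"), "module.exports = {}\n");

// Resolve modules as if the code lived inside the throwaway project.
const requireFromProject = createRequire(path.join(projectDir, "index.js"));
const canvas = requireFromProject("canvas");

console.log(Object.keys(canvas).length); // the stub exports nothing
```

Code that only needs the `require` to succeed is satisfied by the stub, while any attempt to call a real `canvas` API would fail, which matches the README's note that the module is not used in browser-side code.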

4

cypress/.gitignore

vendored

@@ -1,4 +0,0 @@

|

|||||||

cypress.env.json

|

|

||||||

cypress/report

|

|

||||||

cypress/screenshots

|

|

||||||

cypress/videos

|

|

||||||

@@ -1,51 +0,0 @@

|

|||||||

// Cleans up old databases from previous test runs

|

|

||||||

const { CosmosClient } = require("@azure/cosmos");

|

|

||||||

|

|

||||||

// TODO: Add support for other API connection strings

|

|

||||||

const mongoRegex = RegExp("mongodb://.*:(.*)@(.*).mongo.cosmos.azure.com");

|

|

||||||

|

|

||||||

async function cleanup() {

|

|

||||||

const connectionString = process.env.CYPRESS_CONNECTION_STRING;

|

|

||||||

if (!connectionString) {

|

|

||||||

throw new Error("Connection string not provided");

|

|

||||||

}

|

|

||||||

|

|

||||||

let client;

|

|

||||||

switch (true) {

|

|

||||||

case connectionString.includes("mongodb://"): {

|

|

||||||

const [, key, accountName] = connectionString.match(mongoRegex);

|

|

||||||

client = new CosmosClient({

|

|

||||||

key,

|

|

||||||

endpoint: `https://${accountName}.documents.azure.com:443/`

|

|

||||||

});

|

|

||||||

break;

|

|

||||||

}

|

|

||||||

// TODO: Add support for other API connection strings

|

|

||||||

default:

|

|

||||||

client = new CosmosClient(connectionString);

|

|

||||||

break;

|

|

||||||

}

|

|

||||||

|

|

||||||

const response = await client.databases.readAll().fetchAll();

|

|

||||||

return Promise.all(

|

|

||||||

response.resources.map(async db => {

|

|

||||||

const dbTimestamp = new Date(db._ts * 1000);

|

|

||||||

const twentyMinutesAgo = new Date(Date.now() - 1000 * 60 * 20);

|

|

||||||

if (dbTimestamp < twentyMinutesAgo) {

|

|

||||||

await client.database(db.id).delete();

|

|

||||||

console.log(`DELETED: ${db.id} | Timestamp: ${dbTimestamp}`);

|

|

||||||

} else {

|

|

||||||

console.log(`SKIPPED: ${db.id} | Timestamp: ${dbTimestamp}`);

|

|

||||||

}

|

|

||||||

})

|

|

||||||

);

|

|

||||||

}

|

|

||||||

|

|

||||||

cleanup()

|

|

||||||

.then(() => {

|

|

||||||

process.exit(0);

|

|

||||||

})

|

|

||||||

.catch(error => {

|

|

||||||

console.error(error);

|

|

||||||

process.exit(1);

|

|

||||||

});

|

|

||||||
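The removed cleanup script above has two pieces of pure logic: extracting the key and account name from a Mongo-style connection string, and deciding whether a database is older than twenty minutes. A minimal sketch that isolates both without touching `@azure/cosmos` is given below. The helper names and all sample values are hypothetical; the regular expression is copied from the script as-is.

```javascript
// Same pattern as the removed script (the dots are left unescaped there as well).
const mongoRegex = RegExp("mongodb://.*:(.*)@(.*).mongo.cosmos.azure.com");

// Hypothetical helper: derives the key and SQL endpoint from a Mongo-style connection string.
function parseMongoConnectionString(connectionString) {
  const match = connectionString.match(mongoRegex);
  if (!match) {
    return undefined; // the removed script would throw when destructuring null instead
  }
  const [, key, accountName] = match;
  return { key, endpoint: `https://${accountName}.documents.azure.com:443/` };
}

const TWENTY_MINUTES_MS = 1000 * 60 * 20;

// `_ts` is expressed in seconds, so it is scaled to milliseconds before comparing.
function isStale(db, nowMs = Date.now()) {
  return db._ts * 1000 < nowMs - TWENTY_MINUTES_MS;
}

// Made-up values for illustration only.
const parsed = parseMongoConnectionString(
  "mongodb://demo-account:ZmFrZS1rZXk=@demo-account.mongo.cosmos.azure.com:10255/?ssl=true"
);
const now = Date.UTC(2020, 0, 1, 12, 0, 0);
const databases = [
  { id: "old-db", _ts: (now - 30 * 60 * 1000) / 1000 },
  { id: "fresh-db", _ts: (now - 5 * 60 * 1000) / 1000 },
];
const toDelete = databases.filter((db) => isStale(db, now)).map((db) => db.id);

console.log(parsed, toDelete);
```

Passing `nowMs` explicitly keeps the age check deterministic, which makes it easy to test without waiting on a real clock.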

@@ -1,15 +0,0 @@

{
  "integrationFolder": "./integration",
  "pluginsFile": false,
  "fixturesFolder": false,
  "supportFile": "./support/index.js",
  "defaultCommandTimeout": 90000,
  "chromeWebSecurity": false,
  "reporter": "mochawesome",
  "reporterOptions": {
    "reportDir": "cypress/report",
    "json": true,
    "overwrite": false,
    "html": false
  }
}

@@ -1,66 +0,0 @@

// 1. Click on "New Container" on the command bar.
// 2. A pane with the title "Add Container" should appear on the right side of the screen.
// 3. It includes an input box for the database id.
// 4. It includes a checkbox called "Create now".
// 5. When the checkbox is marked, enter the new database id.
// 6. Create a database WITH "Provision throughput" checked.
// 7. Enter the minimum throughput value of 400.
// 8. Enter the container id in the container id text box.
// 9. Enter the partition key in the partition key text box.
// 10. Click "OK" to create a new container.
// 11. Verify that the new container is created along with the database id and appears in the Data Explorer list on the left side of the screen.

const connectionString = require("../../../utilities/connectionString");

let crypt = require("crypto");

context("Cassandra API Test - createDatabase", () => {
  beforeEach(() => {
    connectionString.loginUsingConnectionString(connectionString.constants.cassandra);
  });

  it("Create a new table in Cassandra API", () => {
    const keyspaceId = `KeyspaceId${crypt.randomBytes(8).toString("hex")}`;
    const tableId = `TableId112`;

    cy.get("iframe").then($element => {
      const $body = $element.contents().find("body");
      cy.wrap($body)
        .find('div[class="commandBarContainer"]')
        .should("be.visible")
        .find('button[data-test="New Table"]')
        .should("be.visible")
        .click();

      cy.wrap($body)
        .find('div[class="contextual-pane-in"]')
        .should("be.visible")
        .find('span[id="containerTitle"]');

      cy.wrap($body)
        .find('input[id="keyspace-id"]')
        .should("be.visible")
        .type(keyspaceId);

      cy.wrap($body)
        .find('input[class="textfontclr"]')
        .type(tableId);

      cy.wrap($body)
        .find('input[data-test="databaseThroughputValue"]')
        .should("have.value", "400");

      cy.wrap($body)
        .find('input[data-test="addCollection-createCollection"]')
        .click();

      cy.wait(10000);

      cy.wrap($body)
        .find('div[data-test="resourceTreeId"]')
        .should("exist")
        .find('div[class="treeComponent dataResourceTree"]')
        .should("contain", tableId);
    });
  });
});
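The Cassandra test above generates a random keyspace id but uses the fixed table id `TableId112`, whereas the other tests in this change append random hex to every resource name. A minimal sketch of a shared helper for that pattern follows. The name `randomId` is hypothetical and is not part of the repository; it only wraps the `crypto.randomBytes(8).toString("hex")` expression the tests already use.

```javascript
const crypto = require("crypto");

// Hypothetical helper, for illustration only: returns the prefix followed by
// byteLength random bytes encoded as hex (16 hex characters by default).
function randomId(prefix, byteLength = 8) {
  return `${prefix}${crypto.randomBytes(byteLength).toString("hex")}`;
}
```

With such a helper, a test could write `const tableId = randomId("TableId");` so that repeated runs against the same account are less likely to collide on an existing table name.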

@@ -1,81 +0,0 @@

// 1. Click on "New Graph" on the command bar.
// 2. A pane with the title "Add Container" should appear on the right side of the screen.
// 3. It includes an input box for the database id.
// 4. It includes a checkbox called "Create now".
// 5. When the checkbox is marked, enter the new database id.
// 6. Create a database WITH "Provision throughput" checked.
// 7. Enter the minimum throughput value of 400.
// 8. Enter the container id in the container id text box.
// 9. Enter the partition key in the partition key text box.
// 10. Click "OK" to create a new container.
// 11. Verify that the new container is created along with the database id and appears in the Data Explorer list on the left side of the screen.

const connectionString = require("../../../utilities/connectionString");

let crypt = require("crypto");

context("Graph API Test", () => {
  beforeEach(() => {
    connectionString.loginUsingConnectionString(connectionString.constants.graph);
  });

  it("Create a new graph in Graph API", () => {
    const dbId = `TestDatabase${crypt.randomBytes(8).toString("hex")}`;
    const graphId = `TestGraph${crypt.randomBytes(8).toString("hex")}`;
    const partitionKey = `SharedKey${crypt.randomBytes(8).toString("hex")}`;

    cy.get("iframe").then($element => {
      const $body = $element.contents().find("body");
      cy.wrap($body)
        .find('div[class="commandBarContainer"]')
        .should("be.visible")
        .find('button[data-test="New Graph"]')
        .should("be.visible")
        .click();

      cy.wrap($body)
        .find('div[class="contextual-pane-in"]')
        .should("be.visible")
        .find('span[id="containerTitle"]');

      cy.wrap($body)
        .find('input[data-test="addCollection-createNewDatabase"]')
        .check();

      cy.wrap($body)
        .find('input[data-test="addCollection-newDatabaseId"]')
        .should("be.visible")
        .type(dbId);

      cy.wrap($body)
        .find('input[data-test="addCollectionPane-databaseSharedThroughput"]')
        .check();

      cy.wrap($body)
        .find('input[data-test="databaseThroughputValue"]')
        .should("have.value", "400");

      cy.wrap($body)
        .find('input[data-test="addCollection-collectionId"]')
        .type(graphId);

      cy.wrap($body)
        .find('input[data-test="addCollection-partitionKeyValue"]')
        .type(partitionKey);

      cy.wrap($body)
        .find('input[data-test="addCollection-createCollection"]')
        .click();

      cy.wait(10000);

      cy.wrap($body)
        .find('div[data-test="resourceTreeId"]')
        .should("exist")
        .find('div[class="treeComponent dataResourceTree"]')
        .should("contain", dbId)
        .click()
        .should("contain", graphId);
    });
  });
});

@@ -1,80 +0,0 @@

// 1. Click on "New Container" on the command bar.
// 2. A pane with the title "Add Container" should appear on the right side of the screen.
// 3. It includes an input box for the database id.
// 4. It includes a checkbox called "Create now".
// 5. When the checkbox is marked, enter the new database id.
// 6. Create a database WITH "Provision throughput" checked.
// 7. Enter the minimum throughput value of 400.
// 8. Enter the container id in the container id text box.
// 9. Enter the partition key in the partition key text box.
// 10. Click "OK" to create a new container.
// 11. Verify that the new container is created along with the database id and appears in the Data Explorer list on the left side of the screen.

const connectionString = require("../../../utilities/connectionString");

let crypt = require("crypto");

context("Mongo API Test - createDatabase", () => {
  beforeEach(() => {
    connectionString.loginUsingConnectionString();
  });

  it("Create a new collection in Mongo API", () => {
    const dbId = `TestDatabase${crypt.randomBytes(8).toString("hex")}`;
    const collectionId = `TestCollection${crypt.randomBytes(8).toString("hex")}`;
    const sharedKey = `SharedKey${crypt.randomBytes(8).toString("hex")}`;

    cy.get("iframe").then($element => {
      const $body = $element.contents().find("body");
      cy.wrap($body)
        .find('div[class="commandBarContainer"]')
        .should("be.visible")
        .find('button[data-test="New Collection"]')
        .should("be.visible")
        .click();

      cy.wrap($body)
        .find('div[class="contextual-pane-in"]')
        .should("be.visible")
        .find('span[id="containerTitle"]');

      cy.wrap($body)
        .find('input[data-test="addCollection-createNewDatabase"]')
        .check();

      cy.wrap($body)
        .find('input[data-test="addCollection-newDatabaseId"]')
        .type(dbId);

      cy.wrap($body)
        .find('input[data-test="addCollectionPane-databaseSharedThroughput"]')
        .check();

      cy.wrap($body)
        .find('input[data-test="addCollection-collectionId"]')
        .type(collectionId);

      cy.wrap($body)
        .find('input[data-test="databaseThroughputValue"]')
        .should("have.value", "400");

      cy.wrap($body)
        .find('input[data-test="addCollection-partitionKeyValue"]')
        .type(sharedKey);

      cy.wrap($body)
        .find("#submitBtnAddCollection")
        .click();

      cy.wait(10000);

      cy.wrap($body)
        .find('div[data-test="resourceTreeId"]')
        .should("exist")
        .find('div[class="treeComponent dataResourceTree"]')
        .should("contain", dbId)
        .click()
        .should("contain", collectionId);
    });
  });
});

@@ -1,96 +0,0 @@

// 1. Click on "New Container" on the command bar.
// 2. A pane with the title "Add Container" should appear on the right side of the screen.
// 3. It includes an input box for the database id.
// 4. It includes a checkbox called "Create now".
// 5. When the checkbox is marked, enter the new database id.
// 6. Create a database WITH "Provision throughput" checked.
// 7. Enter the minimum throughput value of 400.
// 8. Enter the container id in the container id text box.
// 9. Enter the partition key in the partition key text box.
// 10. Click "OK" to create a new container.
// 11. Verify that the new container is created along with the database id and appears in the Data Explorer list on the left side of the screen.

const connectionString = require("../../../utilities/connectionString");

let crypt = require("crypto");

context("Mongo API Test", () => {
  beforeEach(() => {
    connectionString.loginUsingConnectionString();
  });

  it.skip("Create a new collection in Mongo API - Autopilot", () => {
    const dbId = `TestDatabase${crypt.randomBytes(8).toString("hex")}`;
    const collectionId = `TestCollection${crypt.randomBytes(8).toString("hex")}`;
    const sharedKey = `SharedKey${crypt.randomBytes(8).toString("hex")}`;

    cy.get("iframe").then($element => {
      const $body = $element.contents().find("body");
      cy.wrap($body)
        .find('div[class="commandBarContainer"]')
        .should("be.visible")
        .find('button[data-test="New Collection"]')
        .should("be.visible")
        .click();

      cy.wrap($body)
        .find('div[class="contextual-pane-in"]')
        .should("be.visible")
        .find('span[id="containerTitle"]');

      cy.wrap($body)
        .find('input[data-test="addCollection-createNewDatabase"]')
        .check();

      cy.wrap($body)
        .find('input[data-test="addCollection-newDatabaseId"]')
        .type(dbId);

      cy.wrap($body)
        .find('input[data-test="addCollectionPane-databaseSharedThroughput"]')
        .check();

      cy.wrap($body)
        .find('div[class="throughputModeContainer"]')
        .should("be.visible")
        .and(input => {
          expect(input.get(0).textContent, "first item").contains("Autopilot (preview)");
          expect(input.get(1).textContent, "second item").contains("Manual");
        });

      cy.wrap($body)
        .find('input[id="newContainer-databaseThroughput-autoPilotRadio"]')
        .check();

      cy.wrap($body)
        .find('select[name="autoPilotTiers"]')
        // .eq(1).should('contain', '4,000 RU/s');
        // // .select('4,000 RU/s').should('have.value', '1');

        .find('option[value="2"]')
        .then($element => $element.get(1).setAttribute("selected", "selected"));

      cy.wrap($body)
        .find('input[data-test="addCollection-collectionId"]')
        .type(collectionId);

      cy.wrap($body)
        .find('input[data-test="addCollection-partitionKeyValue"]')
        .type(sharedKey);

      cy.wrap($body)
        .find('input[data-test="addCollection-createCollection"]')
        .click();

      cy.wait(10000);

      cy.wrap($body)
        .find('div[data-test="resourceTreeId"]')
        .should("exist")
        .find('div[class="treeComponent dataResourceTree"]')
        .should("contain", dbId)
        .click()
        .should("contain", collectionId);
    });
  });
});

@@ -1,67 +0,0 @@

const connectionString = require("../../../utilities/connectionString");

let crypt = require("crypto");

context("Mongo API Test", () => {
  beforeEach(() => {
    connectionString.loginUsingConnectionString();
  });

  it.skip("Create a new collection in existing database in Mongo API", () => {
    const collectionId = `TestCollection${crypt.randomBytes(8).toString("hex")}`;
    const sharedKey = `SharedKey${crypt.randomBytes(8).toString("hex")}`;

    cy.get("iframe").then($element => {
      const $body = $element.contents().find("body");

      cy.wrap($body)
        .find('span[class="nodeLabel"]')
        .should("be.visible")
        .then($span => {
          const dbId1 = $span.text();
          cy.log("DBBB", dbId1);

          cy.wrap($body)
            .find('div[class="commandBarContainer"]')
            .should("be.visible")
            .find('button[data-test="New Collection"]')
            .should("be.visible")
            .click();

          cy.wrap($body)
            .find('div[class="contextual-pane-in"]')
            .should("be.visible")
            .find('span[id="containerTitle"]');

          cy.wrap($body)
            .find('input[data-test="addCollection-existingDatabase"]')
            .check();

          cy.wrap($body)
            .find('input[data-test="addCollection-existingDatabase"]')
            .type(dbId1);

          cy.wrap($body)
            .find('input[data-test="addCollection-collectionId"]')
            .type(collectionId);

          cy.wrap($body)
            .find('input[data-test="addCollection-partitionKeyValue"]')
            .type(sharedKey);

          cy.wrap($body)
            .find('input[data-test="addCollection-createCollection"]')
            .click();

          cy.wait(10000);

          cy.wrap($body)
            .find('div[data-test="resourceTreeId"]')
            .should("exist")
            .find('div[class="treeComponent dataResourceTree"]')
            .click()
            .should("contain", collectionId);
        });
    });
  });
});

@@ -1,203 +0,0 @@

const connectionString = require("../../../utilities/connectionString");

let crypt = require("crypto");

context.skip("Mongo API Test", () => {
  beforeEach(() => {
    connectionString.loginUsingConnectionString();
  });

  it("Create a new collection in Mongo API - Provision database throughput", () => {
    const dbId = `TestDatabase${crypt.randomBytes(8).toString("hex")}`;
    const collectionId = `TestCollection${crypt.randomBytes(8).toString("hex")}`;
    const sharedKey = `SharedKey${crypt.randomBytes(8).toString("hex")}`;

    cy.get("iframe").then($element => {
      const $body = $element.contents().find("body");
      cy.wrap($body)
        .find('div[class="commandBarContainer"]')
        .should("be.visible")
        .find('button[data-test="New Collection"]')
        .should("be.visible")
        .click();

      cy.wrap($body)
        .find('div[class="contextual-pane-in"]')
        .should("be.visible")
        .find('span[id="containerTitle"]');

      cy.wrap($body)
        .find(".createNewDatabaseOrUseExisting")
        .should("have.length", 2)
        .and(input => {
          expect(input.get(0).textContent, "first item").contains("Create new");
          expect(input.get(1).textContent, "second item").contains("Use existing");
        });

      cy.wrap($body)
        .find('input[data-test="addCollection-createNewDatabase"]')
        .check();

      cy.wrap($body)
        .find('input[data-test="addCollectionPane-databaseSharedThroughput"]')
        .check();

      cy.wrap($body)
        .find('input[data-test="addCollection-newDatabaseId"]')
        .type(dbId);

      cy.wrap($body)
        .find('input[data-test="addCollectionPane-databaseSharedThroughput"]')
        .check();

      cy.wrap($body)
        .find('input[data-test="databaseThroughputValue"]')
        .should("have.value", "400");

      cy.wrap($body)
        .find('input[data-test="addCollection-collectionId"]')
        .type(collectionId);

      cy.wrap($body)
        .find('input[data-test="addCollection-partitionKeyValue"]')
        .type(sharedKey);

      cy.wrap($body)
        .find('input[data-test="addCollection-createCollection"]')
        .click();

      cy.wait(10000);

      cy.wrap($body)
        .find('div[data-test="resourceTreeId"]')
        .should("exist")
        .find('div[class="treeComponent dataResourceTree"]')
        .should("contain", dbId)
        .click()
        .should("contain", collectionId);
    });
  });

  it("Create a new collection - without provision database throughput", () => {
    const dbId = `TestDatabase${crypt.randomBytes(8).toString("hex")}`;
    const collectionId = `TestCollection${crypt.randomBytes(8).toString("hex")}`;
    const collectionIdTitle = `Add Collection`;
    const sharedKey = `SharedKey${crypt.randomBytes(8).toString("hex")}`;

    cy.get("iframe").then($element => {
      const $body = $element.contents().find("body");
      cy.wrap($body)
        .find('div[class="commandBarContainer"]')
        .should("be.visible")
        .find('button[data-test="New Collection"]')
        .should("be.visible")
        .click();

      cy.wrap($body)
        .find('div[class="contextual-pane-in"]')
        .should("be.visible")
        .find('span[id="containerTitle"]');

      cy.wrap($body)
        .find('input[data-test="addCollection-createNewDatabase"]')
        .check();

      cy.wrap($body)
        .find('input[data-test="addCollection-newDatabaseId"]')
        .type(dbId);

      cy.wrap($body)
        .find('input[data-test="addCollectionPane-databaseSharedThroughput"]')
        .uncheck();

      cy.wrap($body)
        .find('input[data-test="addCollection-collectionId"]')
        .type(collectionId);

      cy.wrap($body)
        .find('input[id="tab2"]')
        .check({ force: true });

      cy.wrap($body)
        .find('input[data-test="addCollection-partitionKeyValue"]')
        .type(sharedKey);

      cy.wrap($body)
        .find('input[data-test="databaseThroughputValue"]')
        .should("have.value", "400");

      cy.wrap($body)
        .find('input[data-test="addCollection-createCollection"]')
        .click();

      cy.wait(10000);

      cy.wrap($body)
        .find('div[data-test="resourceTreeId"]')
        .should("exist")
        .find('div[class="treeComponent dataResourceTree"]')
        .should("contain", dbId)
        .click()
        .should("contain", collectionId);
    });
  });